Gaze program: stereo vision will help courier robots navigate in the fog


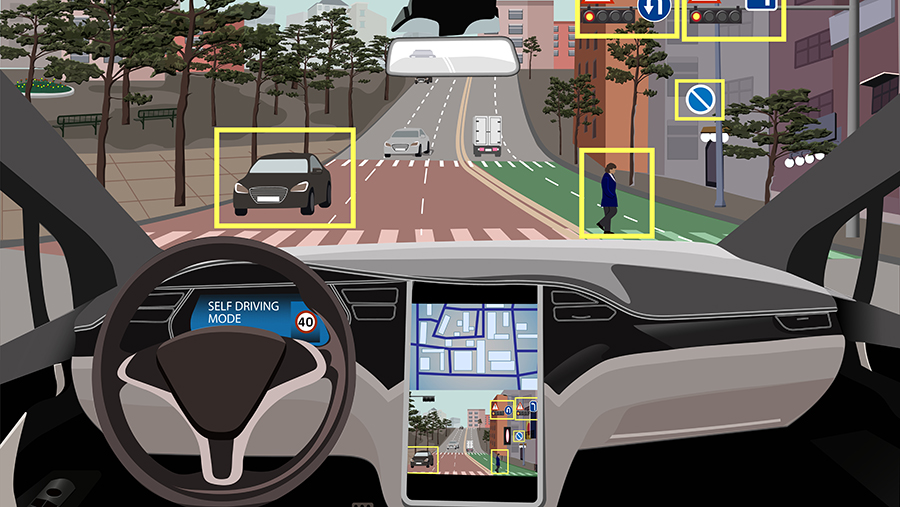

Russian scientists have created a computer stereo vision system that estimates the distance to objects from camera images alone, without additional sensors. Its main advantage is stable operation in difficult conditions such as fog or dense foliage. The technology can be used in many kinds of autonomous vehicles, from driverless taxis to warehouse robots. According to experts, the approach is cost-effective because it removes the need to install many sensors. However, putting it into practice requires a large training dataset for the neural networks.

Stereo vision system

MIPT specialists, together with foreign colleagues, have developed the Un-ViTAStereo stereo vision technology, which estimates the distance to objects without expensive lidars or manual image annotation. The system works where modern algorithms fail: in front of smooth walls, in dense foliage or in fog. It accounts for object shadows and perspective, which makes it suitable for self-driving cars and autonomous robots.
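For context, any calibrated, rectified stereo rig recovers distance by triangulation. The relation below is standard textbook geometry, not a detail taken from the Un-ViTAStereo work: depth $Z$ follows from the disparity $d$ (the horizontal pixel shift of a point between the left and right images), the focal length $f$ in pixels and the baseline $B$ between the two cameras.

$$
Z = \frac{f B}{d}, \qquad \left|\frac{\partial Z}{\partial d}\right| = \frac{f B}{d^{2}} = \frac{Z^{2}}{f B}
$$

The second relation shows why matching errors matter: the same one-pixel disparity error costs far more depth accuracy for a distant object than for a nearby one.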

To improve accuracy, the scientists used a "mentor" model, Depth Anything V2, which estimates relative depth from a single camera image. It does not measure distances in meters, but it almost always correctly determines which objects are closer and which are farther away, taking shadows, perspective and occlusion into account. The training algorithm keeps only those stereo predictions that agree with the mentor's hints and uses them to improve the neural network's accuracy.
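The filtering step can be pictured with a small sketch. It is a hypothetical illustration, not the Un-ViTAStereo code: it assumes the mentor returns a relative inverse-depth (disparity-like) map that is known only up to an unknown scale and shift, removes that scale and shift from both maps with a median and median-absolute-deviation (MAD) normalization, and keeps stereo pixels that agree within a tolerance `tol`. The function names, the normalization and the tolerance are all assumptions.

```python
import numpy as np

def normalize_robust(d, valid):
    """Remove an unknown scale and shift using the median and MAD of valid pixels."""
    med = np.median(d[valid])
    mad = np.median(np.abs(d[valid] - med))
    return (d - med) / max(mad, 1e-8)  # guard against a perfectly flat map

def mentor_agreement_mask(stereo_disp, mono_rel_disp, valid, tol=0.5):
    """Boolean mask of stereo pixels that agree with the mentor's relative map.

    Both maps are normalized independently, so only their relative structure
    is compared, never absolute values.
    """
    s = normalize_robust(stereo_disp, valid)
    m = normalize_robust(mono_rel_disp, valid)
    return valid & (np.abs(s - m) <= tol)

# Toy example: the stereo map is a scaled, shifted copy of the mentor's map,
# except for one grossly wrong pixel at row 1, column 4.
mono = np.arange(1.0, 11.0).reshape(2, 5)
stereo = 2.0 * mono + 1.0
stereo[1, 4] = 60.0
valid = np.ones_like(mono, dtype=bool)
mask = mentor_agreement_mask(stereo, mono, valid)
print(mask)
```

Here nine pixels follow the mentor's structure and only the bad value (60.0) is excluded from the pseudo-labels. Median-based normalization is used because a plain least-squares fit would let that single bad pixel distort the alignment for every other pixel.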

"The Depth Anything V2 model constantly feeds hints to the stereo system. For example: 'I don't know how many meters closer this car is than the tree, but it is definitely closer, and the boundary between them should be sharp,' or 'on this wall, where there is no contrast, the depth should change smoothly,'" said Alexander Dvorkovich, project manager at the MIPT Scientific and Technical Telecommunications Center.
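The two kinds of hint in this quote map naturally onto two familiar loss terms: a ranking loss for "A is closer than B" and an edge-aware smoothness term for contrast-free regions. The sketch below is a hypothetical illustration with assumed names and an assumed weight `alpha`; it is not the project's published training objective.

```python
import numpy as np

def ordinal_loss(depth, pairs):
    """Logistic ranking loss for hints 'pixel a is closer than pixel b'.

    depth: 1-D array of predicted depths (larger means farther).
    pairs: list of (a, b) index pairs where a should be closer than b.
    The loss depends only on depth[b] - depth[a], never on absolute meters.
    """
    diffs = np.array([depth[b] - depth[a] for a, b in pairs], dtype=float)
    return float(np.mean(np.log1p(np.exp(-diffs))))

def smoothness_loss(depth_row, image_row, alpha=10.0):
    """Edge-aware smoothness along one image row.

    Depth jumps are penalized where the image itself is flat (weight near 1)
    and tolerated where the image has a strong edge (weight near 0), so
    object boundaries can stay sharp.
    """
    depth_grad = np.abs(np.diff(depth_row))
    image_grad = np.abs(np.diff(image_row))
    weights = np.exp(-alpha * image_grad)
    return float(np.mean(weights * depth_grad))

# "The car (pixel 0) is closer than the tree (pixel 1)."
depth = np.array([2.0, 8.0])
print(ordinal_loss(depth, [(0, 1)]))  # small: the hint is satisfied
print(ordinal_loss(depth, [(1, 0)]))  # large: the hint is violated

# A blank wall: any depth variation is penalized.
wall = np.zeros(4)
print(smoothness_loss(np.array([1.0, 3.0, 1.0, 3.0]), wall))
print(smoothness_loss(np.ones(4), wall))
```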

The stereo vision systems of robots and self-driving cars build a three-dimensional map of the world much as human vision does, but with cameras instead of eyes and algorithms instead of a brain. However, this mechanism does not work in all conditions: faced with a perfectly white wall or an area of repeating patterns, the algorithm lacks the visual landmarks it needs to match the two images correctly. Such cases used to be handled by manually annotating objects with their exact distances, but this is not always effective. The new technology addresses this problem.
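The failure mode described here is easy to reproduce with classic block matching on a single rectified scanline. The sketch below uses a sum-of-absolute-differences (SAD) cost, a textbook matching criterion rather than anything specific to Un-ViTAStereo, and the scanlines are made-up toy data. On a textured row one disparity clearly wins; on a uniform "white wall" every candidate costs the same; on a repeating pattern several candidates tie.

```python
import numpy as np

def sad_costs(left, right, x, half=2, max_disp=6):
    """SAD matching cost for each candidate disparity at column x.

    Compares a window centred on column x of the left scanline with the
    window shifted left by d pixels in the right scanline (rectified pair).
    """
    patch = left[x - half : x + half + 1]
    costs = []
    for d in range(max_disp + 1):
        cand = right[x - d - half : x - d + half + 1]
        costs.append(float(np.sum(np.abs(patch - cand))))
    return np.array(costs)

# Textured scanline: the left view is the right view shifted by 3 pixels.
right = np.array([3, 7, 1, 8, 2, 9, 4, 6, 0, 5, 7, 2, 9, 1, 6, 3, 8, 0, 4, 5], float)
left = np.roll(right, 3)
textured = sad_costs(left, right, x=10)
print(textured, "best disparity:", int(np.argmin(textured)))

# Uniform "white wall": every disparity matches equally well.
wall = np.full(20, 5.0)
print(sad_costs(wall, wall, x=10))

# Repeating pattern with period 2: all even disparities tie.
stripes = np.tile([0.0, 9.0], 10)
print(sad_costs(np.roll(stripes, 2), stripes, x=10))
```

With no unique minimum, a pure matching algorithm has to guess, which is exactly where extra cues such as a monocular "mentor" can help.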

"The system has already been tested on standard datasets, and Un-ViTAStereo clearly outperformed comparable methods. For example, on the KITTI 2015 autonomous driving benchmark, the proportion of gross errors dropped to 5%. In driving terms, this means a 23% reduction in dangerous errors when estimating the distance to objects such as curbs or pedestrians," said Alexander Dvorkovich.
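For readers who want to check figures like these, KITTI 2015's standard stereo metric counts a pixel as a gross error (an "outlier") when its disparity error exceeds both 3 pixels and 5% of the true disparity, and reports the share of such pixels. The sketch below assumes that the "gross errors" figure quoted above refers to this kind of outlier rate; the function name and toy arrays are illustrative.

```python
import numpy as np

def d1_outlier_rate(pred_disp, gt_disp):
    """Share of ground-truth pixels with a gross disparity error.

    A pixel is an outlier when its absolute error exceeds both 3 px and
    5% of the true disparity. Pixels with gt_disp == 0 carry no ground
    truth (KITTI's ground truth is sparse) and are skipped.
    """
    valid = gt_disp > 0
    err = np.abs(pred_disp - gt_disp)[valid]
    gt = gt_disp[valid]
    outliers = (err > 3.0) & (err > 0.05 * gt)
    return float(outliers.mean())

gt = np.array([10.0, 100.0, 50.0, 0.0])
pred = np.array([14.0, 104.0, 50.5, 7.0])
# Pixel 0: error 4 px > 3 px and > 0.5 px -> outlier.
# Pixel 1: error 4 px > 3 px but not > 5 px -> fine.
# Pixel 2: error 0.5 px -> fine. Pixel 3: no ground truth -> ignored.
print(d1_outlier_rate(pred, gt))
```

The double threshold means a 4-pixel miss counts as gross for a nearby object with small disparity, but not for a very close one with large disparity.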

Building on Un-ViTAStereo, the scientists plan to create a self-learning neural network that can adapt to the specifics of different environments, from city streets to factory floors. They also plan to use sparse but accurate lidar measurements as "super beacons" during training to further improve the system's accuracy.
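One plausible way to use sparse but accurate lidar points during training is to add them as an up-weighted term next to the mentor-filtered pseudo-labels. This is a hypothetical sketch: the function name, the L1 form of both terms and the `lidar_weight` value are assumptions, not details the researchers have described.

```python
import numpy as np

def combined_loss(pred, pseudo, pseudo_mask, lidar, lidar_weight=5.0):
    """L1 loss on trusted pseudo-labels plus an up-weighted sparse lidar term.

    pred, pseudo: dense depth maps (pseudo-labels come from filtered stereo).
    pseudo_mask: boolean mask of pixels whose pseudo-labels are trusted.
    lidar: metric depth where a lidar return exists, NaN everywhere else.
    """
    loss = 0.0
    if pseudo_mask.any():
        loss += float(np.mean(np.abs(pred - pseudo)[pseudo_mask]))
    hits = ~np.isnan(lidar)
    if hits.any():
        # The few lidar points act as high-confidence anchors ("super beacons").
        loss += lidar_weight * float(np.mean(np.abs(pred[hits] - lidar[hits])))
    return loss

pred = np.array([10.0, 20.0, 30.0, 40.0])
pseudo = np.array([10.0, 21.0, 30.0, 40.0])
trusted = np.ones(4, dtype=bool)
lidar = np.array([np.nan, np.nan, 32.0, np.nan])
print(combined_loss(pred, pseudo, trusted, lidar))
```

Weighting the lidar term more heavily lets a handful of metric measurements pull the network toward correct absolute distances even though they cover only a tiny fraction of pixels.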

Practical application

The development could be widely used, from driverless vehicles, agriculture and warehouse robotics to monitoring systems, security and UAVs, says Alice Sotnikova, market expert at NTI Neuronet and deputy CEO of the robot manufacturer Degree of Freedom.

"The development aims to reduce the amount of hardware needed to determine the distance to objects. A lot of work has gone into blind spots: the proposed method correctly determines depth in these problem areas, where other methods fail," she said.

This stereo vision approach reduces the industry's dependence on expensive lidars and time-consuming data annotation. It is especially valuable that the algorithm works reliably in difficult conditions, such as homogeneous surfaces, fog or visual noise, where classical models lose accuracy or produce gaps in the depth map, says Yaroslav Seliverstov, a leading AI expert at University 2035.

"Using an 'intelligent advisor' for a stereo system actually brings machine perception closer to human perception, where what matters is not absolute meters but correct relative relationships and object boundaries. In practical terms, this can significantly improve the reliability of autonomous driving systems, especially in urban environments with poor visibility and complex road geometry," the expert said.

The solution reduces dependence on expensive sensors but does not completely eliminate the need for metrically accurate data sources, and it remains sensitive to the specifics of the training data, said Ruslan Permyakov, deputy director of the NTI Competence Center for Trusted Interaction Technologies at TUSUR.

"Overall, this is not a radically new solution but a qualitative development of existing approaches that closes one of the most problematic classes of errors in 3D perception. Imagine a robot or a driverless vehicle that sees the world almost like a human: it doesn't just capture a picture but understands what is closer and what is farther away, even with a smooth wall, thick fog or dense trees in front of it. That is exactly what the new technology makes possible," the expert said.

According to Sergey Shavetov, associate professor at the Faculty of Control Systems and Robotics at ITMO University, the development will be useful for driver assistance systems and for giving autonomous unmanned robots, including flying ones, the ability to sense their surroundings. The technology may also find use in robot couriers and driverless taxis.

Translated by the Yandex Translate service.